|

Popularity of text encodings

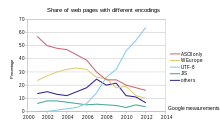

A number of text encoding standards have historically been used on the World Wide Web, though UTF-8 is now dominant in all countries, with nearly all tracked languages at 95% use or higher. The same encodings, and historically many more, are used in local files and databases. Exact measurements of the prevalence of each are not possible for privacy reasons (local files, for example, are not web accessible), but fairly accurate estimates are available for public web sites, and those statistics may or may not accurately reflect use in local files. Attempts at measuring encoding popularity may use counts of (web) documents, or counts weighted by the actual use or visibility of those documents.

The decision to use any one encoding may depend on the language of the documents, the locale the document comes from, or the purpose of the document. Text may be ambiguous as to what encoding it is in; for instance, pure ASCII text is valid ASCII, ISO-8859-1, CP1252, and UTF-8. "Tags" may indicate a document's encoding, but an incorrect tag may be silently corrected by display software (for instance, the HTML spec says that the tag for ISO-8859-1 should be treated as CP1252), so counts of tags may not be accurate.

Popularity on the World Wide Web

UTF-8 has been the most common encoding for the World Wide Web since 2008.[2] As of January 2025, UTF-8 is used by 98.5% of surveyed web sites (and 99.2% of the top 100,000 pages and 98.8% of the top 1,000 highest-ranked web pages); the next most popular encoding, ISO-8859-1, is used by 1.1% (and only 14 of the top 1,000 pages).[3] Although many pages only use ASCII characters to display content, very few websites now declare their encoding to be ASCII rather than UTF-8.[4] Virtually all countries and over 97% of the tracked languages have 95% or more use of UTF-8 encodings on the web.
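
The ambiguity noted earlier, that pure ASCII text is valid under several encodings at once, can be checked directly. The following is a minimal sketch using Python's built-in codecs; the sample strings and the helper name `is_valid_utf8` are illustrative assumptions, not part of any standard. It also shows one byte, 0x93, on which ISO-8859-1 and CP1252 disagree, which hints at why a wrong tag matters.

```python
# Pure ASCII bytes decode to the same text under all four encodings.
ascii_bytes = "Plain ASCII text".encode("ascii")
candidates = ["ascii", "iso-8859-1", "cp1252", "utf-8"]
decoded = {name: ascii_bytes.decode(name) for name in candidates}
all_agree = len(set(decoded.values())) == 1

# Outside the ASCII range the encodings diverge. Byte 0x93 is a C1 control
# character in ISO-8859-1 but a left double quotation mark in CP1252.
quote_byte = b"\x93"
as_latin1 = quote_byte.decode("iso-8859-1")  # U+0093
as_cp1252 = quote_byte.decode("cp1252")      # U+201C


def is_valid_utf8(data: bytes) -> bool:
    """Hypothetical helper: report whether the bytes form well-formed UTF-8."""
    try:
        data.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return True
```

Because a lone 0x93 is a continuation byte with no lead byte, `is_valid_utf8(quote_byte)` is false, while any pure ASCII input passes. Validity checks of this kind can rule encodings out, but they cannot prove which encoding the author intended.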
The major alternative encodings are described below.

The second-most popular encoding varies by locale, and is typically more efficient for the associated language. One such encoding is the Chinese GB 18030 standard, which is a full Unicode Transformation Format; even so, 95.7% of websites in China and its territories use UTF-8,[5][6][7] with GB 18030 (effectively[8]) the next most popular encoding. Big5 is another popular non-UTF encoding, meant for traditional Chinese characters (though GB 18030, being a full UTF, works for those too). It is the next-most popular encoding in Taiwan, after UTF-8 at 96.7%, and is also the second-most used in Hong Kong, where, as elsewhere, UTF-8 is even more dominant at 98.3%.[9] The single-byte Windows-1251 is twice as efficient for the Cyrillic script, yet 95.6% of Russian websites use UTF-8[10] (Greek and Hebrew encodings are likewise twice as efficient, and UTF-8 has over 99% use for those languages).[11][12] Korean, Chinese and Japanese language websites also have relatively high non-UTF-8 use compared to most other countries. Japanese UTF-8 use is at 98.6%; the rest use the legacy EUC-JP and/or Shift JIS (actually decoded as its superset Windows-31J) encodings, which are used about equally.[13][14] South Korea has 94.8% UTF-8 use, with the remaining websites mainly using EUC-KR, which is more efficient for Korean text.

Popularity for local text files

Local storage on computers has considerably more use of "legacy" single-byte encodings than the web does. Attempts to update to UTF-8 have been blocked by editors that do not display or write UTF-8 unless the first character in a file is a byte order mark, which makes it impossible for other software to use UTF-8 without being rewritten to ignore the byte order mark on input and add it on output.
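
The byte order mark handling described above can be sketched with Python's standard `utf-8-sig` codec, which adds the mark on output and skips it on input when present. The sample text and the helper name `read_text_tolerating_bom` are assumptions made for illustration.

```python
import codecs

text = "naïve café"

plain = text.encode("utf-8")         # no byte order mark
with_bom = text.encode("utf-8-sig")  # prefixed with the bytes EF BB BF
bom_prefix_matches = with_bom == codecs.BOM_UTF8 + plain

# A plain UTF-8 decoder keeps the mark as a leading U+FEFF character,
# which is what trips up software that was not written to expect it.
kept = with_bom.decode("utf-8")


def read_text_tolerating_bom(data: bytes) -> str:
    """Hypothetical helper: decode UTF-8 whether or not a BOM is present."""
    return data.decode("utf-8-sig")


stripped = read_text_tolerating_bom(with_bom)
also_ok = read_text_tolerating_bom(plain)
```

Decoding with `utf-8-sig` is the tolerant choice on input because it accepts both forms. Whether to write the mark on output depends on which editors need to open the file.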
UTF-16 files are also fairly common on Windows, but not on other systems.[15][16]

Popularity internally in software

In the memory of a computer program, usage of UTF-16 is very common, particularly on Windows but also in cross-platform languages and libraries such as JavaScript, Python, and Qt. Compatibility with the Windows API is a major reason for this. Non-Windows libraries written in the early days of Unicode also tend to use UTF-16, such as International Components for Unicode.[17]

At one time it was believed by many (and is still believed today by some) that having fixed-size code units offers computational advantages, which led many systems, in particular Windows, to use the fixed-size UCS-2 with two bytes per character. This is false: strings are almost never randomly accessed, and sequential access is the same speed in both variable- and fixed-size encodings. In addition, even UCS-2 was not "fixed size" if combining characters are considered, and when Unicode exceeded 65536 code points it had to be replaced with the non-fixed-size UTF-16 anyway.

Recently it has become clear that the overhead of translating from/to UTF-8 on input and output, and dealing with potential encoding errors in the input UTF-8, overwhelms any benefits UTF-16 could offer. So newer software systems are starting to use UTF-8. The default string primitives in newer programming languages, such as Go,[18] Julia, Rust and Swift 5,[19] assume UTF-8 encoding. PyPy also uses UTF-8 for its strings,[20] and Python is looking into storing all strings with UTF-8.[21] Microsoft now recommends the use of UTF-8 for applications using the Windows API, while continuing to maintain a legacy "Unicode" (meaning UTF-16) interface.[22]
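
The point about UTF-16 not being fixed-size can be illustrated by counting 16-bit code units. This is a small sketch; the helper name `utf16_code_units` and the sample strings are assumptions chosen for the example.

```python
def utf16_code_units(s: str) -> int:
    """Hypothetical helper: number of 16-bit code units in the UTF-16 form."""
    return len(s.encode("utf-16-le")) // 2


bmp_letter = "A"            # U+0041, inside the Basic Multilingual Plane
emoji = "\U0001F600"        # U+1F600, beyond the 65536 code points of UCS-2
combined = "e\u0301"        # "e" followed by a combining acute accent

units_letter = utf16_code_units(bmp_letter)   # one code unit
units_emoji = utf16_code_units(emoji)         # a surrogate pair
units_combined = utf16_code_units(combined)   # one unit per code point
```

The emoji needs two code units even though it is a single code point, and the accented letter is two code points that a reader perceives as one character. In both cases, indexing by code unit does not land on character boundaries.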

|